This blog piece was originally published on the University of Birmingham’s Social Sciences Blog (May 2018).

The Progress 8 measures of school performance compare pupils’ GCSE results across 8 subjects to those of other pupils with the same primary school SATs results. There are many reasons behind the differences we see in the scores, and a good number of them have nothing to do with school quality. As a result, the scores tell us surprisingly little about school performance.

It is easy to find fault in performance measures, and there is certainly a lot of ammunition to do this in the case of the Progress 8 measures. However, in my research, I work towards improving education policy and practice, rather than merely criticising the status quo. So here are five ways to reform the school Progress measures:

1. Fix the ability bias:

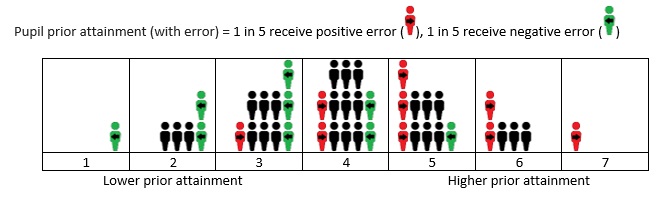

Progress measures help us see pupil progress relative to similar-ability peers in other schools. But, as my recent research has shown, error in the measures used to estimate ability means that a sizable ‘ability bias’ remains, giving a particularly large advantage to selective grammar schools. Ability bias can be rectified by looking at average school starting points as well as the starting points for individual pupils (or by using more sophisticated methods which estimate and correct for measurement unreliability).

2. Replace or supplement Progress 8 with a measure that takes context into account:

My paper, published when the first Progress measures were introduced in 2016, found that around a third of the variation in Progress 8 can be accounted for by a small number of school intake characteristics such as the proportion of pupils on free school meals and/or with English as an additional language (EAL). Using more sophisticated measures would reveal further predictable differences unrelated to school quality (e.g. considering EAL groups, the number of years pupils are on free school meals, or factors such as parental education). We must guard against measures excusing lower standards for disadvantaged groups. But without contextualised estimates we are left with little more than impressions of how interacting contextual factors influence outcomes, and no one is in a position to make data-informed judgements.
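To make the idea of a contextualised estimate concrete, here is a minimal sketch. It assumes a single intake characteristic (the proportion of pupils on free school meals) and an ordinary least-squares line; a real model would use several characteristics. The function name `contextualised_scores` and all figures are hypothetical and purely illustrative, not real school data or the method used in the paper.

```python
from statistics import mean

def contextualised_scores(intake, progress):
    """Fit progress = intercept + slope * intake by least squares.

    Returns the slope, the intercept and each school's residual, i.e. how far
    its score sits above or below what its intake alone would predict.
    """
    x_bar, y_bar = mean(intake), mean(progress)
    sxx = sum((x - x_bar) ** 2 for x in intake)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(intake, progress))
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    residuals = [y - (intercept + slope * x) for x, y in zip(intake, progress)]
    return slope, intercept, residuals

# Hypothetical schools: proportion on free school meals, and Progress 8 score
fsm_share = [0.05, 0.10, 0.20, 0.30, 0.40]
progress8 = [0.4, 0.2, 0.1, -0.2, -0.3]

slope, intercept, residuals = contextualised_scores(fsm_share, progress8)
for share, raw, adjusted in zip(fsm_share, progress8, residuals):
    print(f"FSM {share:.0%}: raw {raw:+.2f}, contextualised {adjusted:+.2f}")
```

The residual is the part of a school's score that the intake characteristic does not predict. Presenting it alongside the raw score, rather than instead of it, is one way to avoid excusing lower standards for disadvantaged groups.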

3. Create measures which smooth volatility over several years:

Progress 8 is highly affected by unreliable assessments and statistical noise. Only a tiny portion of Progress 8 variation is likely to be due to differences in school performance. A large body of research has revealed very low rates of stability for progress (value-added) measures over time. Judging a school using a single year’s data is like judging my golfing ability from a single hole. A school performance measure based on a 2- or 3-year rolling average would smooth out volatility in the measure and discourage short-termism.
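As a rough illustration of the rolling average, the sketch below smooths one school's yearly scores over a 2- or 3-year window. The function name `rolling_average` and the scores are hypothetical, and the code assumes one score per consecutive year with no gaps.

```python
from statistics import mean

def rolling_average(scores_by_year, window=3):
    """Map each year to the mean of its score and the preceding window - 1 years.

    Years without a full window of data behind them are left out.
    """
    years = sorted(scores_by_year)
    smoothed = {}
    for i in range(window - 1, len(years)):
        span = years[i - window + 1 : i + 1]
        smoothed[years[i]] = mean(scores_by_year[y] for y in span)
    return smoothed

# Hypothetical Progress 8 scores for one school (illustrative, not real data)
school_scores = {2016: 0.30, 2017: -0.15, 2018: 0.21}

print(rolling_average(school_scores, window=2))
print(rolling_average(school_scores, window=3))
```

In this made-up example the single-year scores swing from positive to negative and back, while the averaged figures move far less from year to year.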

4. Show the spread, not the average:

It is not necessary to present a single score rather than, for example, the proportion of children in each of 5 progress bands, from high to low. Using a single score means that, against the intentions of the measure, the scores of individual students can be masked by the overall average and downplayed. Similarly, the measure attempts to summarise school performance across all subjects using a single number. Schools have strengths and weaknesses, and correlations between performance across subjects are moderate at best.
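The banded presentation could look something like the sketch below, which reports the share of pupils in each of 5 progress bands next to the single average. The band labels, the cut points and the pupil scores are all assumptions made for illustration; they are not official thresholds or real data.

```python
from bisect import bisect_right
from collections import Counter
from statistics import mean

# Hypothetical band labels and cut points (not official DfE thresholds)
BAND_LABELS = ["low", "below average", "average", "above average", "high"]
CUT_POINTS = [-1.0, -0.5, 0.5, 1.0]

def band_proportions(pupil_scores):
    """Return the proportion of pupils falling in each progress band.

    A score exactly on a cut point is placed in the higher of the two bands.
    """
    counts = Counter(BAND_LABELS[bisect_right(CUT_POINTS, s)] for s in pupil_scores)
    total = len(pupil_scores)
    return {label: counts[label] / total for label in BAND_LABELS}

# Hypothetical pupil-level progress scores for one school
pupil_scores = [-1.4, -0.7, -0.2, 0.0, 0.1, 0.3, 0.6, 0.9, 1.2, 1.5]

print(f"school average: {mean(pupil_scores):+.2f}")
for label, share in band_proportions(pupil_scores).items():
    print(f"{label}: {share:.0%}")
```

Here the average alone would hide the fact that a fifth of these hypothetical pupils sit in the two lowest bands.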

5. Give health warnings and support for interpretation:

Publishing the Progress measures in ‘school performance tables’ and inviting parents to ‘compare school performance’ does little to encourage and support parents to consider the numerous reasons why the scores are not reflective of school performance. Experts have called for measures to be accompanied by prominent ‘health warnings’ if published. Confidence intervals are not enough. The DfE should issue and prominently display guidance to discourage too much weight being placed on the measures.

Researchers in this field have worked hard to make the limitations of the Progress measures known. The above recommendations chime with many studies and professional groups calling for change.

Trustworthy measures of school performance are currently not a realistic prospect. The only way I can see them being informative is through use in a professional context, alongside many other sources of evidence – and even then I have my doubts.